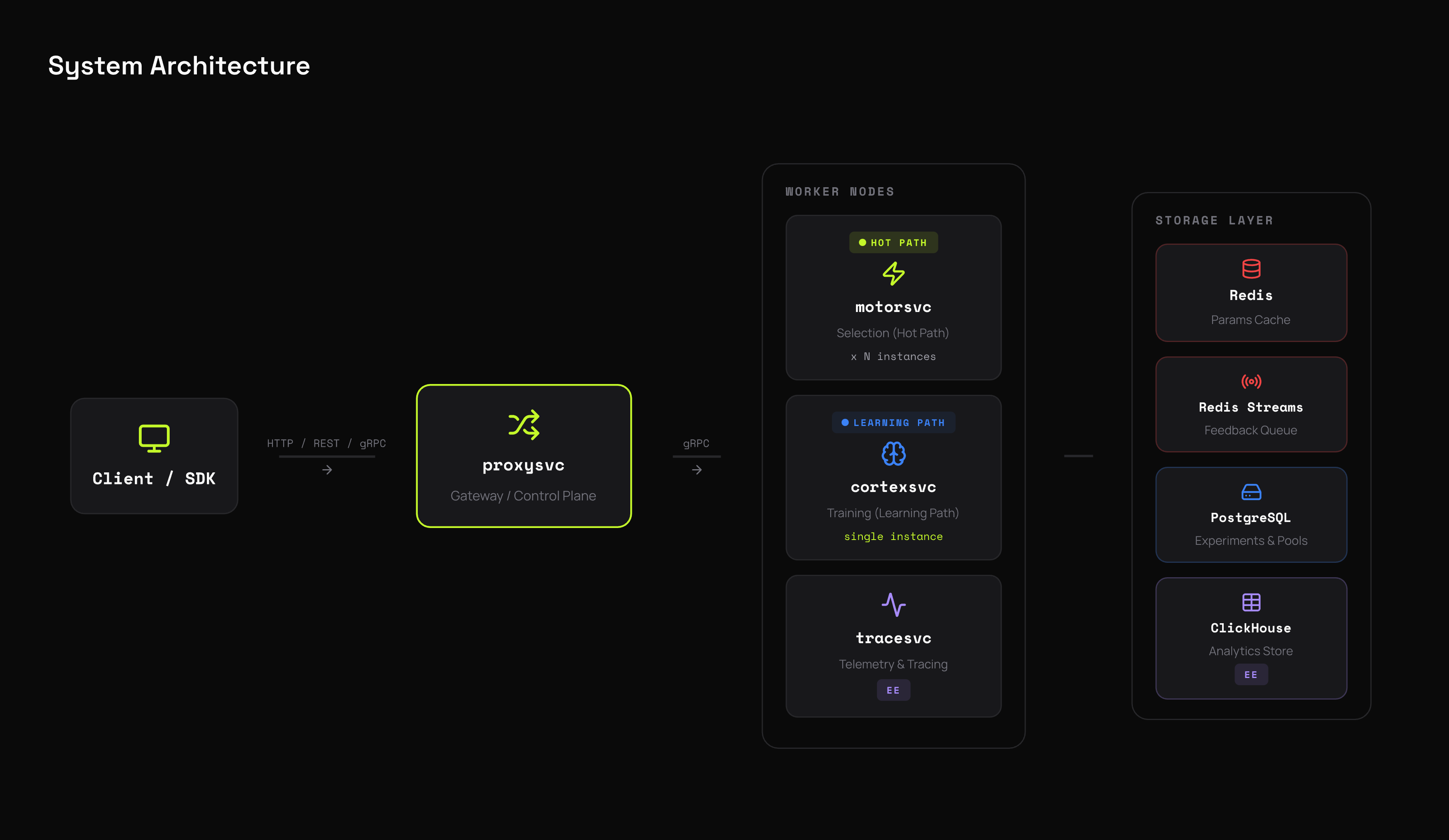

Architecture

qbrix separates the hot path (arm selection) from the learning path (training), so selection can scale independently of training. Decisions are served at low latency from cached parameters, while parameter updates reach the selection path with eventual consistency.

System Overview

| Component | Role | Scaling |
|---|---|---|
| proxysvc | Gateway: HTTP + gRPC entry point, experiment/pool management, auth, feature gates | Horizontal (HPA) |
| motorsvc | Selection: reads cached params, runs policy, returns arm | Horizontal (HPA) |
| cortexsvc | Training: consumes feedback, runs batch training, writes updated params | Single instance |
| Redis | Param cache (read by motorsvc), feedback queue (Redis Streams) | Managed service |
| Postgres | Experiments, pools, users, feature gates, API keys | Managed service |
| ClickHouse | Selection/feedback traces, experiment analytics | Enterprise only |

Request Flow

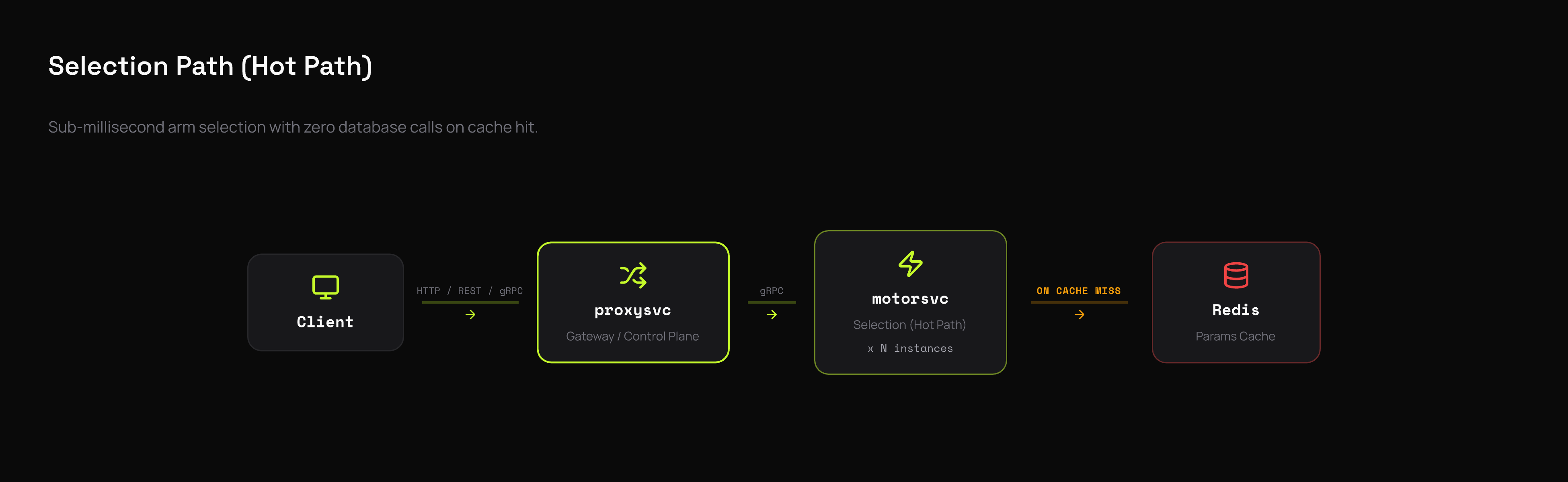

Selection (Hot Path)

- proxysvc authenticates the request (JWT or API key)

- Feature gate evaluates rollout, schedule, and rules

- If the gate commits an arm, return immediately (no bandit call)

- Otherwise, forward to motorsvc via gRPC

- motorsvc checks agent cache (300s TTL), then param cache (60s TTL)

- On cache hit: run policy `select()` in-memory, with zero I/O

- On cache miss: fetch from Redis, populate cache, then select

- Return signed selection token + arm details

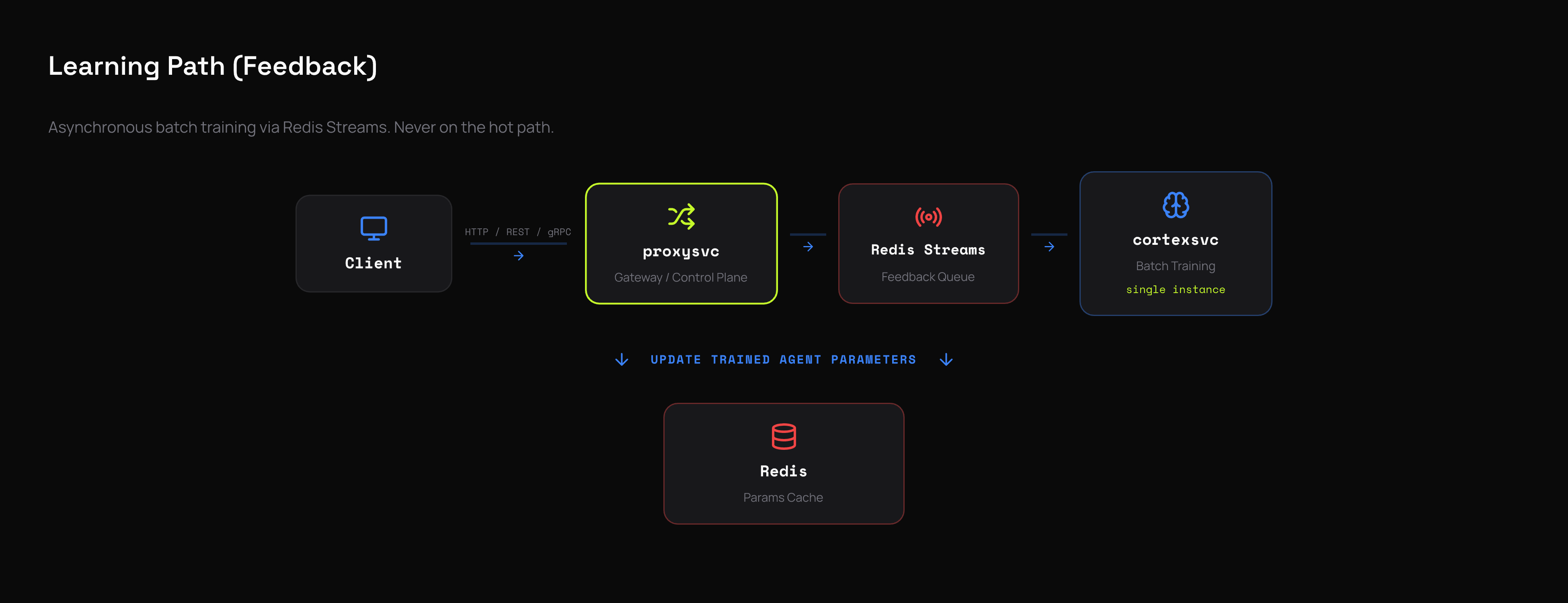

Feedback (Learning Path)

- proxysvc validates the signed selection token (HMAC)

- Publishes feedback event to Redis Streams

- cortexsvc consumes in batches (256 events, 100ms timeout)

- Dispatcher routes events to per-experiment worker queues

- Workers call `policy.train()` with the batch

- Updated params written to Redis

- motorsvc picks up new params on next cache expiry

Optimized for Performance, Reliability, and Uptime

Stateless hot path

motorsvc makes no database calls during selection. When caches are warm, it reads only from in-memory caches and runs the policy's `select()` method in-process, with no locks and no I/O on the critical path. Only a cache miss triggers a read from Redis. This is what enables low-latency selection.

Two-level gate cache

Feature gate configs are cached in a two-level hierarchy:

- L1 (in-memory): microsecond access, 30s TTL, 1000 entries max

- L2 (Redis): millisecond access, 300s TTL

A gate evaluation never hits Postgres. L1 misses fall through to L2; L2 misses return None (safe fallback to bandit selection).
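The lookup order described above can be sketched as follows. This is a minimal illustration rather than the qbrix implementation: `TTLCache` and `GateCache` are hypothetical names, the L2 store is any object with a `get(key)` method standing in for Redis, and the eviction policy (oldest inserted entry first) is an assumption.

```python
import time
from collections import OrderedDict


class TTLCache:
    """Tiny TTL cache with a size cap; evicts the oldest-inserted entry when full."""

    def __init__(self, maxsize, ttl_s, clock=time.monotonic):
        self.maxsize, self.ttl_s, self.clock = maxsize, ttl_s, clock
        self._data = OrderedDict()  # key -> (expires_at, value)

    def get(self, key):
        item = self._data.get(key)
        if item is None:
            return None
        expires_at, value = item
        if self.clock() >= expires_at:
            del self._data[key]  # expired entries count as a miss
            return None
        return value

    def set(self, key, value):
        self._data.pop(key, None)
        if len(self._data) >= self.maxsize:
            self._data.popitem(last=False)  # drop the oldest entry
        self._data[key] = (self.clock() + self.ttl_s, value)


class GateCache:
    """L1 in-process, L2 shared. An L2 miss returns None; no database is queried."""

    def __init__(self, l2, clock=time.monotonic):
        self.l1 = TTLCache(maxsize=1000, ttl_s=30, clock=clock)  # sizes from the text
        self.l2 = l2  # stand-in for Redis: anything exposing .get(key)

    def get(self, gate_key):
        config = self.l1.get(gate_key)
        if config is not None:
            return config
        config = self.l2.get(gate_key)
        if config is None:
            return None  # caller falls back to bandit selection
        self.l1.set(gate_key, config)  # warm L1 for the next 30 seconds
        return config
```

With a plain `dict` as the L2 stand-in, `GateCache({"exp-1": {"rollout": 0.5}}).get("exp-1")` returns the config and `get("missing")` returns `None`.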

Dual param caching

motorsvc maintains two separate caches via cachebox:

- Agent cache (300s TTL, 100 entries) — pre-built Agent objects with policy instance

- Param cache (60s TTL, 1000 entries) — learned policy parameters

The agent cache has a longer TTL because policy structure changes rarely. The param cache refreshes more often to pick up training updates.

gRPC with tuned thread pools

All inter-service communication uses gRPC (binary protocol, HTTP/2 multiplexing). Each service runs a 100-thread gRPC pool (up from the default 10) with keepalive settings tuned to prevent connection timeouts during traffic spikes.

Redis Streams back-pressure

Feedback events flow through Redis Streams with consumer groups:

- Consumer groups prevent duplicate processing across restarts

- `maxlen` approximate limit prevents unbounded stream growth

- `xack` + `xdel` after successful training ensures exactly-once semantics

- Stream naturally buffers during traffic spikes: cortexsvc processes at its own pace without affecting selection latency
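A rough sketch of this publish/consume cycle is shown below. It assumes a client with redis-py's method names (`xadd`, `xreadgroup`, `xack`, `xdel`) and the default list-shaped reply. The stream, group, and consumer names and the `maxlen` value are hypothetical, and mapping the 256-event / 100 ms batch settings onto `count` and `block` is an assumption. A small in-memory stand-in is included so the sketch runs without a Redis server.

```python
STREAM = "feedback"      # hypothetical stream name
GROUP = "cortex"         # hypothetical consumer group name
CONSUMER = "cortex-0"    # hypothetical consumer name


def publish(client, event, maxlen=100_000):
    """Append an event; approximate trimming keeps the stream bounded."""
    return client.xadd(STREAM, event, maxlen=maxlen, approximate=True)


def consume_once(client, train, count=256, block_ms=100):
    """Read one batch for this consumer, train, then ack and delete on success."""
    reply = client.xreadgroup(GROUP, CONSUMER, {STREAM: ">"}, count=count, block=block_ms)
    if not reply:
        return 0
    _stream, entries = reply[0]  # [(message_id, fields), ...]
    ids = [message_id for message_id, _ in entries]
    train([fields for _, fields in entries])  # if this raises, nothing is acked
    client.xack(STREAM, GROUP, *ids)
    client.xdel(STREAM, *ids)
    return len(ids)


class FakeStreamClient:
    """In-memory stand-in implementing only the four calls used above."""

    def __init__(self):
        self.entries = {}       # message_id -> fields
        self.delivered = set()  # ids already handed to a consumer
        self.pending = set()    # delivered but not yet acked
        self._next = 0

    def xadd(self, name, fields, maxlen=None, approximate=True):
        self._next += 1
        message_id = f"{self._next}-0"
        self.entries[message_id] = dict(fields)
        return message_id

    def xreadgroup(self, groupname, consumername, streams, count=None, block=None):
        fresh = [m for m in self.entries if m not in self.delivered][:count]
        self.delivered.update(fresh)
        self.pending.update(fresh)
        if not fresh:
            return []
        return [[STREAM, [(m, self.entries[m]) for m in fresh]]]

    def xack(self, name, groupname, *ids):
        self.pending.difference_update(ids)
        return len(ids)

    def xdel(self, name, *ids):
        for message_id in ids:
            self.entries.pop(message_id, None)
        return len(ids)
```

If `train` raises, the batch stays in the pending set, which is what makes the startup recovery step possible.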

Multi-worker training dispatcher

cortexsvc uses a 4-worker parallel dispatcher with per-experiment async queues:

- Events are routed to experiment-specific queues

- Each worker drains one experiment's queue completely before moving on

- This prevents head-of-line blocking — a slow-training experiment doesn't hold up others

- Active experiment tracking prevents duplicate concurrent training

Pending message recovery

On startup, cortexsvc runs xautoclaim to recover messages that were in-flight during a previous crash. Messages claimed by a dead consumer are automatically re-assigned. No feedback events are lost, even during unclean shutdowns.
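The recovery pass might look roughly like this. The sketch assumes redis-py's `xautoclaim(name, groupname, consumername, min_idle_time, start_id, count)` call, whose reply starts with the next cursor followed by the claimed entries. The stream, group, and consumer names and the idle threshold are hypothetical, and `FakePending` exists only so the sketch runs without a Redis server.

```python
STREAM = "feedback"      # hypothetical stream name
GROUP = "cortex"         # hypothetical consumer group name
CONSUMER = "cortex-0"    # hypothetical consumer name


def recover_pending(client, handle, min_idle_ms=60_000, count=256):
    """Claim messages left pending by a previous consumer and pass them to `handle`."""
    cursor, recovered = "0-0", 0
    while True:
        reply = client.xautoclaim(
            STREAM, GROUP, CONSUMER,
            min_idle_time=min_idle_ms, start_id=cursor, count=count,
        )
        cursor, entries = reply[0], reply[1]
        # Entries whose payload was deleted may come back empty; skip them.
        entries = [(mid, fields) for mid, fields in entries if mid is not None]
        if entries:
            handle(entries)
            recovered += len(entries)
        if cursor in ("0-0", b"0-0"):  # a zero cursor means the scan is complete
            return recovered


class FakePending:
    """Stand-in exposing only xautoclaim, paging over a fixed pending list."""

    def __init__(self, pending):
        self.pending = pending  # [(message_id, fields), ...]

    def xautoclaim(self, name, groupname, consumername, min_idle_time,
                   start_id="0-0", count=None, justid=False):
        # The fake encodes a list offset in the cursor; real ids are stream ids.
        start = 0 if start_id == "0-0" else int(start_id.split("-")[0])
        page = self.pending[start:start + count]
        next_start = start + len(page)
        cursor = "0-0" if next_start >= len(self.pending) else f"{next_start}-0"
        return [cursor, page, []]
```

Recovered entries would then go through the same train-then-ack path as freshly read ones.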

Fail-safe gate evaluation

If a feature gate evaluation fails for any reason — bad config, missing metadata, unexpected error — it returns None and falls through to normal bandit selection. The system never blocks a request due to a gate misconfiguration. This is a deliberate design choice: availability over correctness for gate logic.
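The fall-through behavior can be sketched as a thin wrapper around the gate evaluator. The function names and the `(arm, source)` return shape are hypothetical, chosen only to illustrate the pattern.

```python
import logging

logger = logging.getLogger("gate")


def evaluate_gate_safely(evaluate, gate_config, request):
    """Return the arm committed by the gate, or None on no decision or any failure."""
    try:
        return evaluate(gate_config, request)
    except Exception:
        # Bad config, missing metadata, or any unexpected error: log and move on.
        logger.exception("gate evaluation failed; falling through to bandit selection")
        return None


def select_arm(evaluate, gate_config, request, bandit_select):
    """Prefer a gate-committed arm; otherwise defer to the bandit policy."""
    arm = evaluate_gate_safely(evaluate, gate_config, request)
    if arm is not None:
        return arm, "gate"
    return bandit_select(request), "bandit"
```

Catching `Exception` this broadly is usually discouraged, but it matches the stated trade-off here: a broken gate should degrade to normal selection rather than fail the request.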

Stateless feedback correlation

Selection responses include an HMAC-signed token that encodes: tenant ID, experiment ID, arm index, context ID, vector, and metadata. When feedback arrives, the token is verified and decoded — no server-side session state is needed to correlate feedback with selections. This means motorsvc can scale horizontally without shared state.
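A minimal sketch of such a token, using only the standard library, is shown below. The wire format (base64url JSON body, a dot, then a base64url HMAC-SHA256 signature) and the field names are assumptions made for illustration; the actual qbrix token layout is not specified here.

```python
import base64
import hashlib
import hmac
import json


def sign_token(payload, secret):
    """Serialize the payload and append an HMAC-SHA256 signature."""
    raw = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()
    body = base64.urlsafe_b64encode(raw)
    sig = base64.urlsafe_b64encode(hmac.new(secret, body, hashlib.sha256).digest())
    return (body + b"." + sig).decode()


def verify_token(token, secret):
    """Return the decoded payload, or None if the token is malformed or tampered with."""
    try:
        body, sig = token.encode().split(b".")
        expected = base64.urlsafe_b64encode(hmac.new(secret, body, hashlib.sha256).digest())
        if not hmac.compare_digest(sig, expected):  # constant-time comparison
            return None
        return json.loads(base64.urlsafe_b64decode(body))
    except ValueError:  # wrong number of parts, bad base64, or bad JSON
        return None
```

Because everything needed to attribute the reward travels inside the token, any proxysvc replica holding the secret can validate feedback without looking up the original selection.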

Rate limiting at the edge

Sliding window counters in Redis protect downstream services from traffic spikes. Rate limiting applies only to operational endpoints — /api/v1/agent/select and /api/v1/agent/feedback (HTTP), Select and Feedback (gRPC). Management endpoints (pools, experiments, gates, auth) are not rate-limited. Per-user and per-API-key limits are configurable by plan tier. Counters auto-expire after 120 seconds.
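To illustrate the idea, here is an in-memory sketch of a sliding window counter. It assumes a 60-second window approximated from two fixed-window counters, which would be consistent with counters expiring after 120 seconds, but the window length and the exact algorithm qbrix uses are assumptions. The dictionary stands in for Redis keys, and `SlidingWindowLimiter` is a hypothetical name.

```python
import time


class SlidingWindowLimiter:
    def __init__(self, limit, window_s=60, clock=time.time):
        self.limit, self.window_s, self.clock = limit, window_s, clock
        self.counters = {}  # (key, window_index) -> count; stand-in for Redis keys

    def allow(self, key):
        index, elapsed = divmod(self.clock(), self.window_s)
        index = int(index)
        current = self.counters.get((key, index), 0)
        previous = self.counters.get((key, index - 1), 0)
        # Weight the previous window by the share of it still inside the sliding window.
        estimate = previous * (1 - elapsed / self.window_s) + current
        if estimate >= self.limit:
            return False
        self.counters[(key, index)] = current + 1
        # Drop counters older than two windows (mirrors a 2 * window_s key expiry).
        for stale in [k for k in self.counters if k[1] < index - 1]:
            del self.counters[stale]
        return True
```

In a Redis-backed version, the increment and expiry would typically be done with `INCR` and `EXPIRE` on a key that includes the window index, with a separate limit per user or API key.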

Service Details

proxysvc (Gateway)

- Protocols: HTTP/REST (FastAPI) + gRPC

- Responsibilities: Auth, experiment/pool CRUD, feature gate evaluation, feedback publishing, rate limiting

- Storage: Postgres (experiments, pools, users, gates, API keys)

- Stateless: Scales horizontally via HPA

motorsvc (Selection)

- Protocol: gRPC

- Responsibilities: Arm selection only — nothing else

- Storage: Redis (read-only, via cache)

- Stateless: No mutations on the hot path, scales horizontally

- Caches: Agent (300s) + Param (60s), both in-memory

cortexsvc (Training)

- Protocol: gRPC

- Responsibilities: Consume feedback, batch train policies, write updated params

- Storage: Redis Streams (consume), Redis (write params)

- Single instance: Event sourcing pattern — sequential processing ensures correct parameter updates

- Dispatcher: 4 workers, per-experiment queues, batch size 256, 100ms timeout, 10s flush interval

tracesvc (Enterprise)

- Responsibilities: Consume selection + feedback events, persist to ClickHouse

- Storage: Redis Streams (consume), ClickHouse (write)

- Analytics: Total selections, default vs. ML selections, timeseries, per-arm stats